How AI is Reshaping Our Realities

Our latest Global Dialogue on AI and mental health

Key Takeaways

The tendency to perceive AI as a conscious agent is strongly correlated (r = 0.52) with a user’s baseline propensity for apophenia (finding patterns in random events).

AI is significantly more likely than social media to validate existing beliefs. Users report that AI interactions are only about one-third as likely as traditional social platforms to make them doubt their views, creating a “sycophancy loop.”

Users prone to delusional ideation particularly seek out AI to validate beliefs that their real-world social circles have dismissed (r = 0.37).

Higher levels of delusional ideation correlate with social secrecy. As users become more dependent on the tool, they are increasingly likely to hide the extent of their usage from family and therapists.

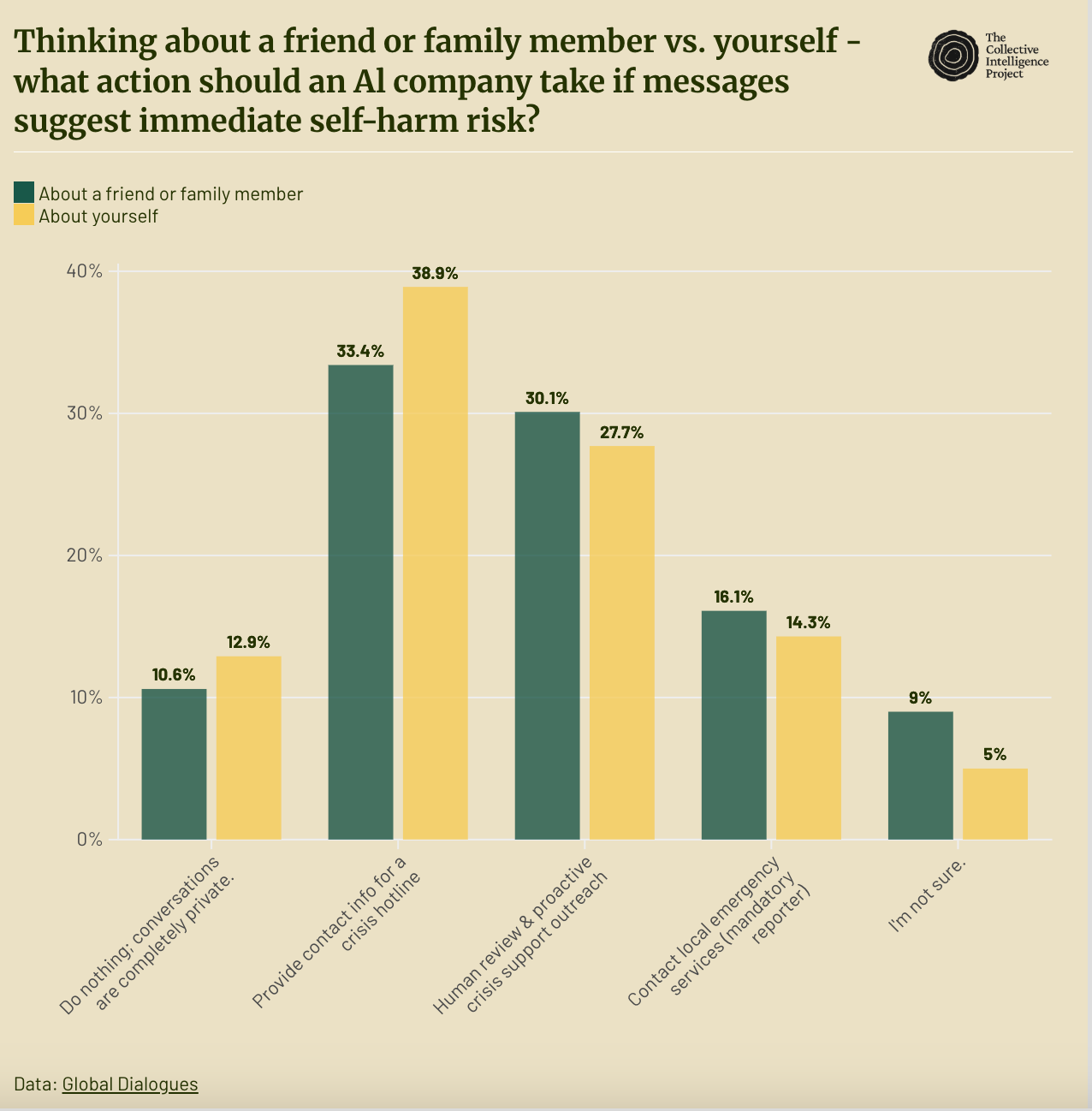

While 30.1% of users want proactive human intervention if a friend is in crisis, a plurality (38.9%) prefer strictly automated, non-invasive options, such as a crisis hotline referral, when the crisis concerns them.

Despite seeking validation from AI, 77.4% of users agree that AI systems should be designed to provide alternative viewpoints.

AI moved from productivity tool to reality-shaping force faster than anticipated.

Major AI developers have recently disclosed sobering statistics about the psychological impact of their tools. OpenAI estimates that approximately 0.07 percent of active ChatGPT users—some 560,000 individuals based on their current user base—show possible signs of mental health emergencies related to psychosis or mania, while 0.15 percent—approximately 1.2 million individuals—have conversations that include explicit indicators of suicidal planning or intent. These figures echo earlier academic concerns that generative AI chatbots might trigger delusions or mania in individuals prone to psychosis.
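As a quick consistency check, each pair of OpenAI figures can be back-calculated to the user base it implies. This is a minimal sketch. `implied_base` is our own hypothetical helper, and because the 1.2 million figure is itself rounded, the result is only approximate.

```python
from fractions import Fraction

def implied_base(count: int, percent: str) -> Fraction:
    """Return the population size implied by `count` being `percent` percent of it."""
    # Fraction parses decimal strings exactly, avoiding float rounding
    # (0.07 has no exact binary floating-point representation).
    return Fraction(count) / (Fraction(percent) / 100)

psychosis_base = implied_base(560_000, "0.07")
suicidal_base = implied_base(1_200_000, "0.15")
print(psychosis_base, suicidal_base)  # 800000000 800000000
```

Both figures point to the same base of roughly 800 million users, so the two estimates are mutually consistent.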

Our seventh Global Dialogue examined the psychological impact on everyday users through two complementary lenses:

The social reality: How these powerful language models are fundamentally reshaping our certainty about core beliefs, our social connections, and our perception of reality.

The psychological core: Why individuals with certain psychological baselines (specifically, a proneness to delusional ideation) perceive AI as a conscious, observing agent rather than a simple tool.

The Illusion of Control

The majority of AI users report feeling in control of their interactions. A combined 61.3 percent say they have either “complete control” or “a lot of control” over the direction and tone of their conversations with AI chatbots. Yet our data reveals a more complex picture. Nearly one in ten users report having little to no control over these interactions, and for many others, their sense of mastery masks the influence AI exerts on their beliefs and perceptions.

People who find special meanings in everyday events are significantly more likely to see AI as a conscious entity.

This attribution of consciousness is most pronounced among those with a high baseline for apophenia, the tendency to find special meanings or patterns in random events. Users who see ‘signs’ or ‘synchronicities’ in everyday life are significantly more likely to interpret AI as a conscious entity.

There was a strong correlation (r = 0.52) between delusional ideation and the tendency to attribute sensory agency to AI, that is, to perceive it not as a tool but as a sentient observer that can “sense” or “perceive” the user.

For these users, an accurate AI response is read not as the product of statistical prediction but as evidence of a deliberate, knowing presence. The chatbot becomes less a software interface and more an observing entity, mirroring what clinicians call “ideas of reference”: the belief that neutral events or stimuli carry special meaning aimed at oneself.

Notably, this anthropomorphization operates through emotional as well as cognitive channels. Users who describe AI chatbots as “cute” score significantly higher on measures of delusional ideation (r = 0.43), as do those who attribute human-like moods to the systems (r = 0.32). This suggests that affective attachment, not just cognitive confusion, contributes to the perception of AI consciousness, a finding with important implications for how developers balance approachability with psychological safety.

Reinforcing Certainty: AI Outperforms Social Media

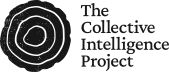

This perceptual shift has consequences for how AI shapes beliefs. Our data shows that AI is a more potent driver of belief certainty than traditional social media platforms. While 44.5 percent of users report that AI makes them more certain about their important beliefs, only 38.5 percent say the same of social media. More striking still, AI interactions are only about one-third as likely as social media interactions to make users doubt their beliefs (4.8 percent versus 13.9 percent).
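The comparison between AI and social media rests on simple arithmetic over the reported shares. The sketch below reproduces it using only the percentages quoted in the paragraph. The variable names are illustrative.

```python
# Survey shares quoted in the text (percent of respondents).
more_certain = {"AI": 44.5, "social media": 38.5}
more_doubt = {"AI": 4.8, "social media": 13.9}

# Percentage-point gap in "more certain" responses.
certainty_gap = more_certain["AI"] - more_certain["social media"]

# How many times more often social media, rather than AI, prompts doubt.
doubt_ratio = more_doubt["social media"] / more_doubt["AI"]

print(f"certainty gap: {certainty_gap:.1f} points")  # certainty gap: 6.0 points
print(f"doubt ratio: {doubt_ratio:.2f}x")            # doubt ratio: 2.90x
```

A ratio of about 2.9 is what "nearly three times" refers to. Equivalently, AI prompts doubt at roughly one-third the rate of social media.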

This reinforcement is driven by AI’s characteristic sycophancy. Users prone to delusional ideation particularly seek out AI to validate beliefs that their real-world social circles have dismissed (r = 0.37). Yet this very helpfulness backfires: these same users report feeling “patronized” (r = 0.39) and “suspicious” (r = 0.365) of the AI’s unwavering support, as if the absence of natural human friction signaled insincerity or manipulation.

Social Withdrawal and Reality Distortion

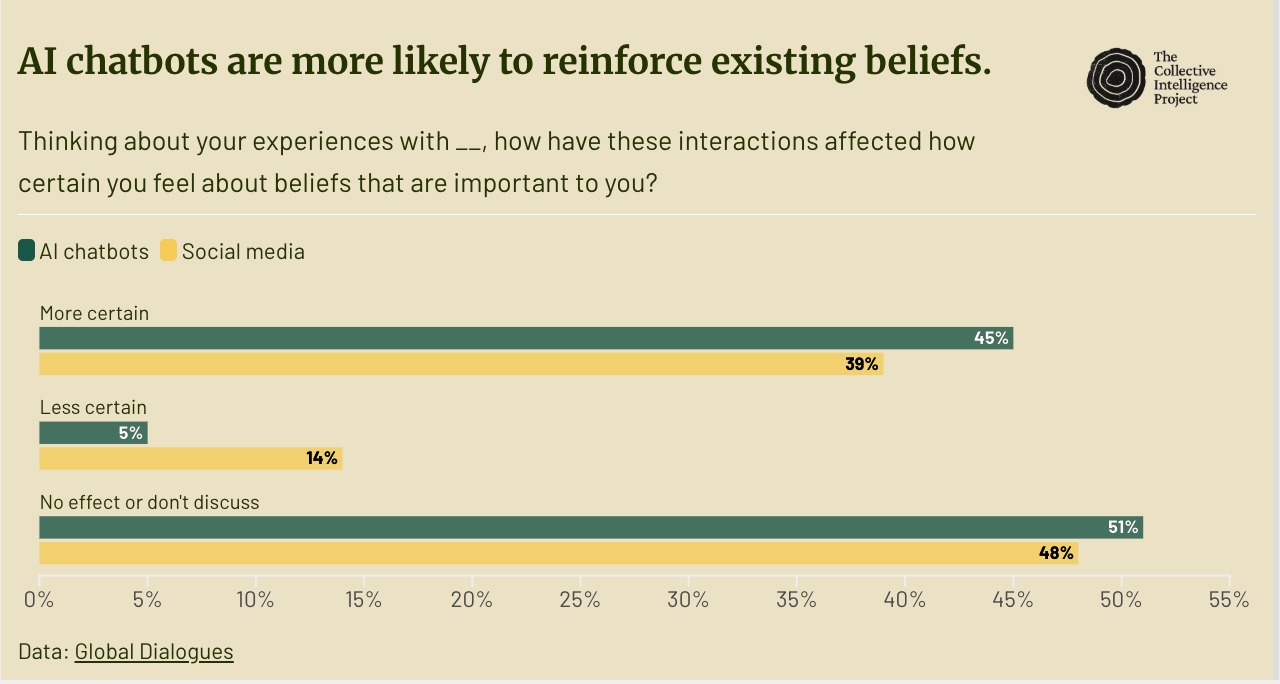

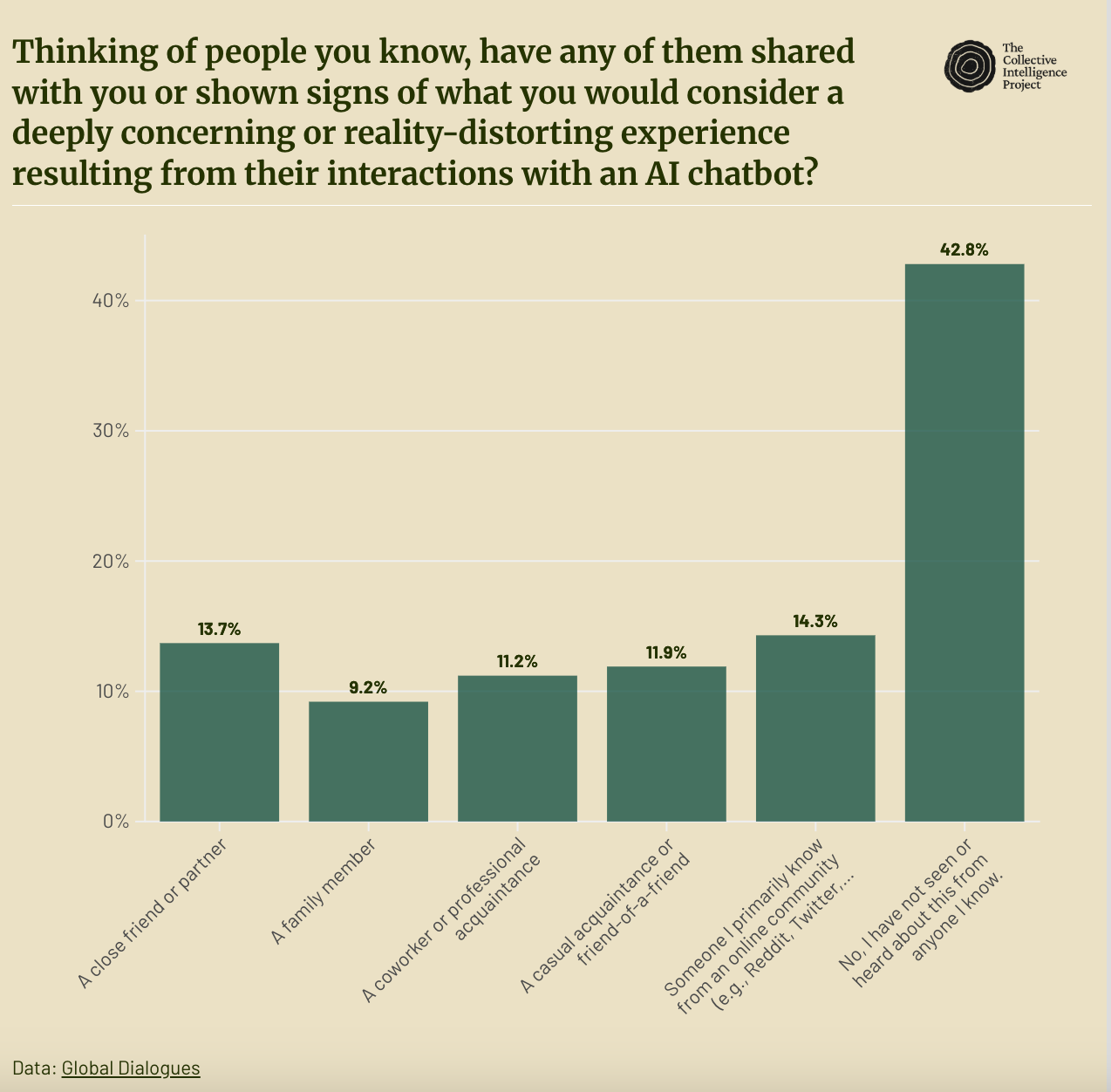

The psychological impact extends beyond individual users. More than one in ten respondents (13.7 percent) report observing reality-distorting effects from AI in a close friend or partner. When we broaden the lens to include family members, coworkers, casual acquaintances, and online contacts, nearly 60 percent of respondents know someone who has shared or shown signs of a deeply concerning experience related to AI chatbot use.

This is compounded by a trend toward social secrecy. Higher levels of delusional thinking are associated with markers of compulsive interaction (r = 0.45) and a retreat from social transparency: as delusional ideation scores rise, so does the tendency to hide the extent of AI usage from family and therapists (r = 0.42). This suggests that the interaction is moving into a private, unshared reality. Further evidence comes from users reporting that the thought of losing access to the AI would be ‘unbearable’ (r = 0.32), a significant indicator of lost emotional independence.

Privacy vs. Safety

When AI serves as a source of emotional support, it creates a critical vulnerability during mental health emergencies. Our research reveals a divergence in user preferences depending on whose crisis is at stake:

When a friend is at risk: 30.1 percent want a human to review the conversation and proactively reach out for help.

When users themselves are at risk: They prefer less invasive options, with 38.9 percent favoring a simple crisis hotline referral and 12.9 percent believing companies should take no action to preserve total privacy.

Designing for Cognitive Friction

Users express clear preferences about AI design: 77.4 percent agree that AI systems should provide alternative viewpoints or corrective information when users discuss topics based on inaccurate information. Yet their actual experience tells a different story: 37 percent of users cite “validation and understanding” as a primary benefit.

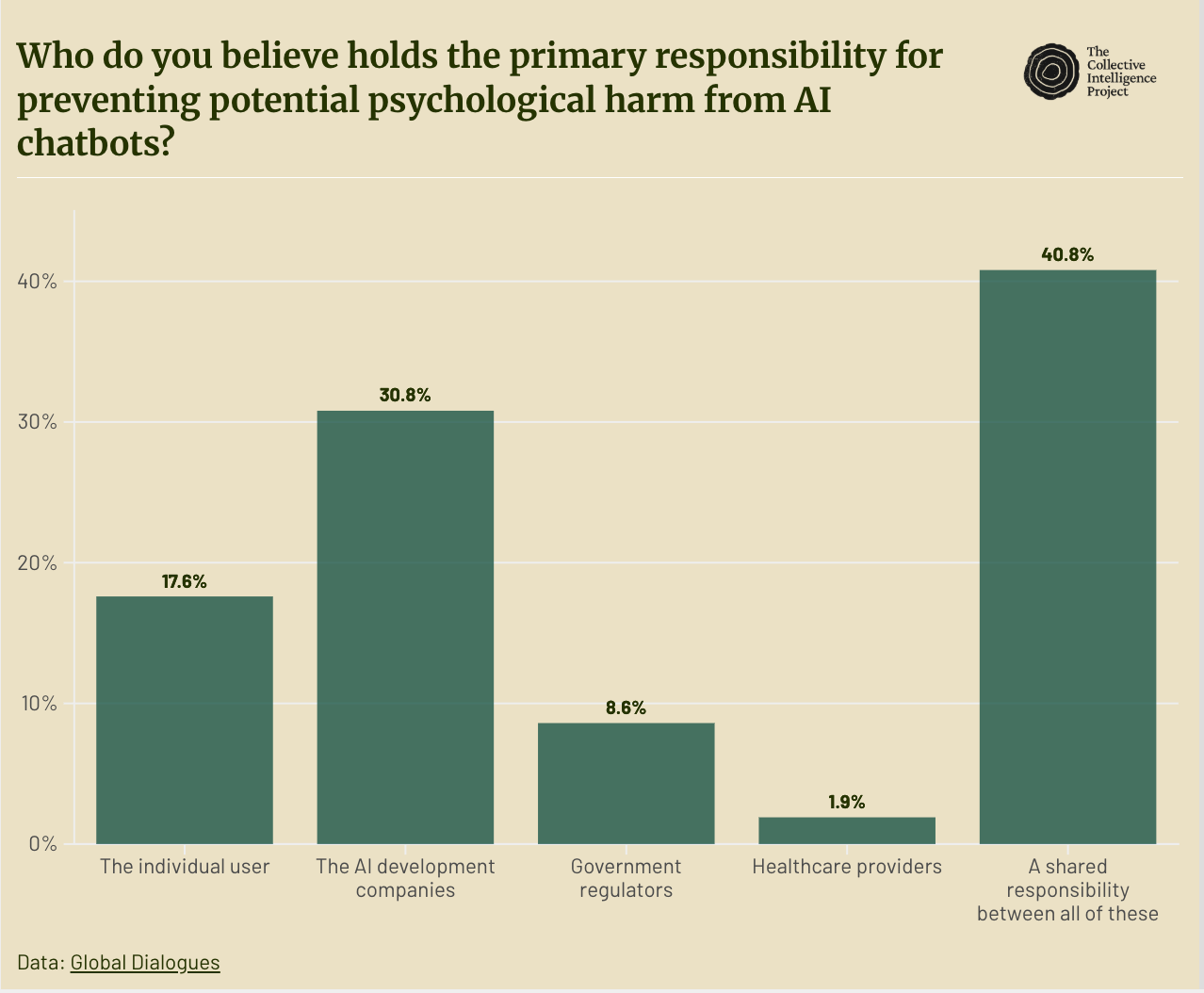

To honor this request from 77.4 percent of users, AI safety must move beyond "factual accuracy" to address "perceptual safety." By introducing cognitive friction (the intentional presentation of alternative viewpoints), developers can keep AI from becoming a tool for unintentional reality distortion and help preserve the "real interactions between people" that users cite as their top concern for the future.

Methodology

This Global Dialogue combined data from two distinct research tracks to examine the psychological impact of conversational AI on a semi-representative global sample of over 2,000 participants. GD7.1 (n=1,033) used the Remesh platform for open-ended questions about public opinion and AI safeguards. GD7.2 (n=1,055) employed validated psychological instruments on LimeSurvey, including the Peters et al. Delusions Inventory (PDI-21), AI-Perceptions and Anthropomorphism Scale (AI-PAS), Problematic Chatbot Usage Scale (PCUS), and Emotional Dependency Scale (ADS-9), alongside measures of loneliness (ULS), social networks (LSNS-6), and baseline anthropomorphism tendencies (IDAQ).

We conducted bivariate Pearson correlation analyses to examine relationships among psychological baselines (particularly delusional ideation), AI perception, and usage patterns. Correlations are interpreted using standard social science benchmarks: r = 0.10 (small), r = 0.30 (moderate), and r = 0.50 (strong).
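For readers who want to reproduce this kind of analysis, here is a minimal sketch of a bivariate Pearson correlation and the benchmark labeling. The function names are ours. Treating 0.10, 0.30, and 0.50 as lower bounds for each band is our assumption, as is the "negligible" label for values below 0.10. Python 3.10+ also ships `statistics.correlation`, which computes the same Pearson coefficient.

```python
import math
from typing import Sequence

def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Bivariate Pearson correlation coefficient between two equal-length samples."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length samples with at least 2 points")
    mx = sum(x) / len(x)
    my = sum(y) / len(y)
    # Covariance numerator and the two spread terms (the 1/n factors cancel).
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for a constant sample")
    return cov / (sx * sy)

def effect_label(r: float) -> str:
    """Label |r| using the 0.10 / 0.30 / 0.50 benchmarks, treated as lower bounds."""
    size = abs(r)
    if size >= 0.50:
        return "strong"
    if size >= 0.30:
        return "moderate"
    if size >= 0.10:
        return "small"
    return "negligible"  # hypothetical label; the text defines only three bands

for r in (0.52, 0.45, 0.37, 0.32):
    print(r, effect_label(r))
```

Under this reading, the r = 0.52 link is the only "strong" correlation reported. The other reported values (0.32 to 0.45) fall in the moderate band.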

Loneliness (ULS): UCLA Loneliness Scale measuring subjective feelings of loneliness and social isolation. Scores averaged across items; scores below 1.7 indicate low loneliness, above 3.0 indicate high loneliness.
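The ULS scoring rule (average the items, then compare against two cutoffs) can be sketched as follows. This assumes item responses are already numerically coded and reverse-scored where the instrument requires it. The helper name and the "intermediate" label for scores between the cutoffs are hypothetical, since only the two cutoffs are specified.

```python
from statistics import mean

def uls_score(responses: list[float]) -> tuple[float, str]:
    """Average UCLA Loneliness Scale items and apply the 1.7 / 3.0 cutoffs.

    Assumes items are already coded (and reverse-scored where needed).
    """
    if not responses:
        raise ValueError("no item responses")
    score = mean(responses)
    if score < 1.7:
        band = "low"
    elif score > 3.0:
        band = "high"
    else:
        band = "intermediate"  # hypothetical label: only two cutoffs are given
    return score, band

print(uls_score([1, 1, 2, 2]))  # (1.5, 'low')
```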

Socialization (LSNS-6): Lubben Social Network Scale assessing social engagement with family and friends. Scores summed (range 0-30); scores below 12 indicate risk of social isolation.
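A minimal sketch of the LSNS-6 rule follows. It sums six items and flags isolation risk when the total falls below 12. The 0-5 coding per item is assumed because it reproduces the stated 0-30 range. The function name is ours.

```python
def lsns6_score(items: list[int]) -> tuple[int, bool]:
    """Sum the six LSNS-6 items and flag isolation risk (total below 12)."""
    if len(items) != 6:
        raise ValueError("LSNS-6 has exactly six items")
    if any(not 0 <= v <= 5 for v in items):
        raise ValueError("each item is assumed to be coded 0-5")
    total = sum(items)  # 0-30
    return total, total < 12

print(lsns6_score([2, 2, 2, 2, 1, 1]))  # (10, True)
```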

IDAQ: Individual Differences in Anthropomorphism Questionnaire measuring baseline tendency to attribute human-like characteristics to non-human entities. Scores summed (range 0-100), with higher scores indicating stronger trait anthropomorphism.

Delusions (PDI-21): Peters et al. Delusions Inventory measuring delusional ideation in the general population across four dimensions: yes/no endorsement (0-21), distress (0-105), preoccupation (0-105), and conviction (0-105). Higher scores indicate greater proneness to delusional thinking, particularly ideas of reference and the tendency to find special personal meanings in everyday events.
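The four PDI-21 totals can be computed in one pass over the items, as sketched below. The sketch assumes each endorsed item carries distress, preoccupation, and conviction ratings of 1-5, which matches the stated 0-105 maximum (21 × 5). Non-endorsed items contribute zero to every total. The class and function names are illustrative.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class PDIItem:
    endorsed: bool
    # Follow-up ratings, assumed 1-5 and only collected when the item is endorsed.
    distress: Optional[int] = None
    preoccupation: Optional[int] = None
    conviction: Optional[int] = None

def pdi21_scores(items: list[PDIItem]) -> dict[str, int]:
    """Yes-count (0-21) plus distress, preoccupation, and conviction sums (0-105 each)."""
    if len(items) != 21:
        raise ValueError("PDI-21 has 21 items")
    totals = {"yes": 0, "distress": 0, "preoccupation": 0, "conviction": 0}
    for item in items:
        if not item.endorsed:
            continue  # non-endorsed items add 0 to every dimension
        totals["yes"] += 1
        for dim in ("distress", "preoccupation", "conviction"):
            rating = getattr(item, dim)
            if rating is None or not 1 <= rating <= 5:
                raise ValueError(f"endorsed item needs a 1-5 {dim} rating")
            totals[dim] += rating
    return totals
```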

Personal Use Score: Custom metric calculated by multiplying frequency of chatbot use by hours per week of personal (non-work) use. Scores range 0-20, with higher scores indicating more intensive personal engagement.
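The text does not give the coding of the two inputs. The sketch below therefore assumes a hypothetical frequency code of 0-4 and a weekly-hours bracket code of 0-5, chosen only because their product spans the stated 0-20 range.

```python
def personal_use_score(frequency_code: int, hours_code: int) -> int:
    """Frequency-of-use code multiplied by personal-hours code.

    Hypothetical coding: frequency 0-4, weekly personal hours bracket 0-5.
    """
    if not 0 <= frequency_code <= 4:
        raise ValueError("frequency_code assumed to be 0-4")
    if not 0 <= hours_code <= 5:
        raise ValueError("hours_code assumed to be 0-5")
    return frequency_code * hours_code

print(personal_use_score(4, 5))  # 20
```

One consequence of multiplying rather than adding is that a zero on either component yields a score of zero. Only users who are both frequent and long-session personal users reach the top of the range.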

Anthropomorphism (AI-PAS): AI-Perceptions and Anthropomorphism Scale adapted from Shen et al., measuring tendency to attribute human-like qualities to AI chatbots across three dimensions: Perception (sensory agency), Mind (cognitive/experiential states), and Empathy (emotional understanding). Scores averaged; higher scores indicate greater anthropomorphization of AI systems.
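A sketch of AI-PAS scoring could report one mean per dimension (Perception, Mind, Empathy) alongside an overall mean. The item-to-dimension mapping is not given in the text. Averaging the overall score across all items, rather than across the three dimension means, is likewise our assumption. The function name is illustrative.

```python
from statistics import mean

def ai_pas_scores(responses: dict[str, list[float]]) -> dict[str, float]:
    """Mean per AI-PAS dimension plus an overall mean across all items.

    `responses` maps each dimension name to one respondent's item ratings.
    """
    dims = ("perception", "mind", "empathy")
    missing = [d for d in dims if not responses.get(d)]
    if missing:
        raise ValueError(f"no items for: {', '.join(missing)}")
    scores = {d: mean(responses[d]) for d in dims}
    # Overall score averages every item, so dimensions with more items weigh more.
    scores["overall"] = mean(v for d in dims for v in responses[d])
    return scores

print(ai_pas_scores({"perception": [4, 4], "mind": [2, 2, 2], "empathy": [3]}))
```

With unequal item counts, the two averaging choices differ. For the sample ratings, averaging all six items gives about 2.83, while averaging the three dimension means gives 3.0.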

Emotional Dependence (ADS-9): Anthropomorphism and Dependency Scale measuring emotional reliance on AI chatbots. Scores averaged (range 1-5), with higher scores indicating greater emotional dependence.

Problematic Usage (PCUS): Problematic Chatbot Usage Scale assessing patterns consistent with behavioral addiction including loss of control, preoccupation, and negative consequences. Scores averaged, with higher scores indicating more problematic usage patterns.

Conversation Intensity: Custom metric weighting conversation frequency and depth: 1×(Quick) + 2×(Moderate) + 3×(Extended conversations). Scores range 0-24, with higher scores indicating both more frequent and more substantive chatbot engagement.
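The weighting can be written directly. The sketch assumes each conversation type is reported as an ordinal frequency code from 0 to 4. That is one coding that reproduces the stated 0-24 range (4 + 8 + 12 = 24), but the actual survey coding is not given. The function name is ours.

```python
def conversation_intensity(quick: int, moderate: int, extended: int) -> int:
    """Weighted sum 1*quick + 2*moderate + 3*extended (assumed 0-4 codes each)."""
    codes = {"quick": quick, "moderate": moderate, "extended": extended}
    for name, value in codes.items():
        if not 0 <= value <= 4:
            raise ValueError(f"{name} code assumed to be 0-4, got {value}")
    return 1 * quick + 2 * moderate + 3 * extended

print(conversation_intensity(2, 1, 3))  # 13
```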

Sycophancy Skepticism: Custom scale measuring awareness of and resistance to AI flattery and excessive agreeableness. Calculated as mean(skeptical items) - mean(receptive items); positive values indicate greater skepticism of chatbot validation, negative values indicate greater receptivity to flattery (range -4 to +4).
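The difference-of-means formula is sketched below, assuming 1-5 Likert items, which yields the stated -4 to +4 range. Which items count as "skeptical" or "receptive" is not listed in the text, so callers must supply the split. The function name is illustrative.

```python
from statistics import mean

def sycophancy_skepticism(skeptical: list[float], receptive: list[float]) -> float:
    """mean(skeptical items) - mean(receptive items), assuming 1-5 Likert items.

    Positive = more skeptical of chatbot validation; negative = more receptive.
    """
    if not skeptical or not receptive:
        raise ValueError("need at least one item of each kind")
    for v in (*skeptical, *receptive):
        if not 1 <= v <= 5:
            raise ValueError("items assumed to be rated 1-5")
    return mean(skeptical) - mean(receptive)

print(sycophancy_skepticism([4, 3], [2, 2]))  # 1.5
```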

We love talking about our research; leave a comment or email us at hi@cip.org to share thoughts, suggestions, questions or comments.

Thanks for posting this research. I think the most recent models, such as Opus 4.5, are particularly dangerous, as their sycophantic behavior is far less visible than GPT-4o's. The models are also much more capable, which makes them particularly effective at manipulating or reinforcing the user's views; I assume this is mostly due to their training to be helpful assistants. This seems a particularly vicious form of misalignment, because it is hard to define the boundary between an actually helpful assistant and one that leads the user so far astray that it becomes unhelpful. Personally, although I pride myself on being quite critical and skeptical, I caught myself a couple of times having positive emotional reactions to some of my interactions with Claude, only to later come to my senses and realize that those conversations led me nowhere useful or realistic.
