Built on Shared Knowledge: What the World Wants from AI Wealth

AI labs and policymakers are focusing on AI dividends to address economic insecurity. We asked 1,041 people across 64 countries what they actually want from AI wealth.

Recently, there have been a number of proposals for AI dividends as a way to address AI's future labor shocks and its concentrating effects. OpenAI released an industrial policy framework that included a proposal for a public wealth fund that would give Americans an automatic stake in AI companies. Congressional candidate Alex Bores announced a plan for an AI dividend that would act as an insurance policy for workers facing job loss as a result of AI. The Economic Security Project has been advocating tax code reform for shared wealth.

As with any policy agenda, these proposals involve clear tradeoffs regarding allocation, distribution, and prioritization. It is our view at the Collective Intelligence Project (CIP) that these tradeoffs ought to be shaped by deliberative public input, so that policy is informed by people, reflects real needs, and is responsive to real-world data.

This thinking framed our collaboration with Windfall Trust on our eighth Global Dialogue, in which we surveyed 1,041 people across 64 countries, asking them to weigh in on the economic future they want from AI.

Each of the proposals acknowledges the need for new institutions to manage AI’s economic transition. The most prominent among them (public wealth funds, tax shifts, portable benefits) are ultimately mechanisms for redistributing the gains of automation. They assume the core challenge is distributional: AI creates wealth, and the question is how to distribute it fairly.

We wanted to know what happens when you ask those same questions about wealth, work, redistribution, and governance to the global public directly. The responses challenge some assumptions that dominate the current policy conversation around transformative AI.

AI is already here, and people are holding two realities at once

The debate about whether AI will affect the economy is, for most of the world, already settled. 98% of our respondents encounter AI systems at least weekly. Over half use AI daily at work and in their personal lives. 72% say it has noticeably or profoundly improved their daily lives, with information access and learning cited as the primary change by 78%.

These same people are watching AI displace workers around them. Six in ten personally know someone who has lost a job to automation and over a third know several. 40% expect their own jobs to be automated within a decade. 60% expect AI to reduce the availability of good jobs over the next decade.

The same people often hold both sentiments at once: that AI is useful and that it is threatening. People are actively engaging with a technology they believe is simultaneously improving their individual lives and undermining the broader economic structures they depend on.

The world wants work, not a basic income, but not for the reasons you might think

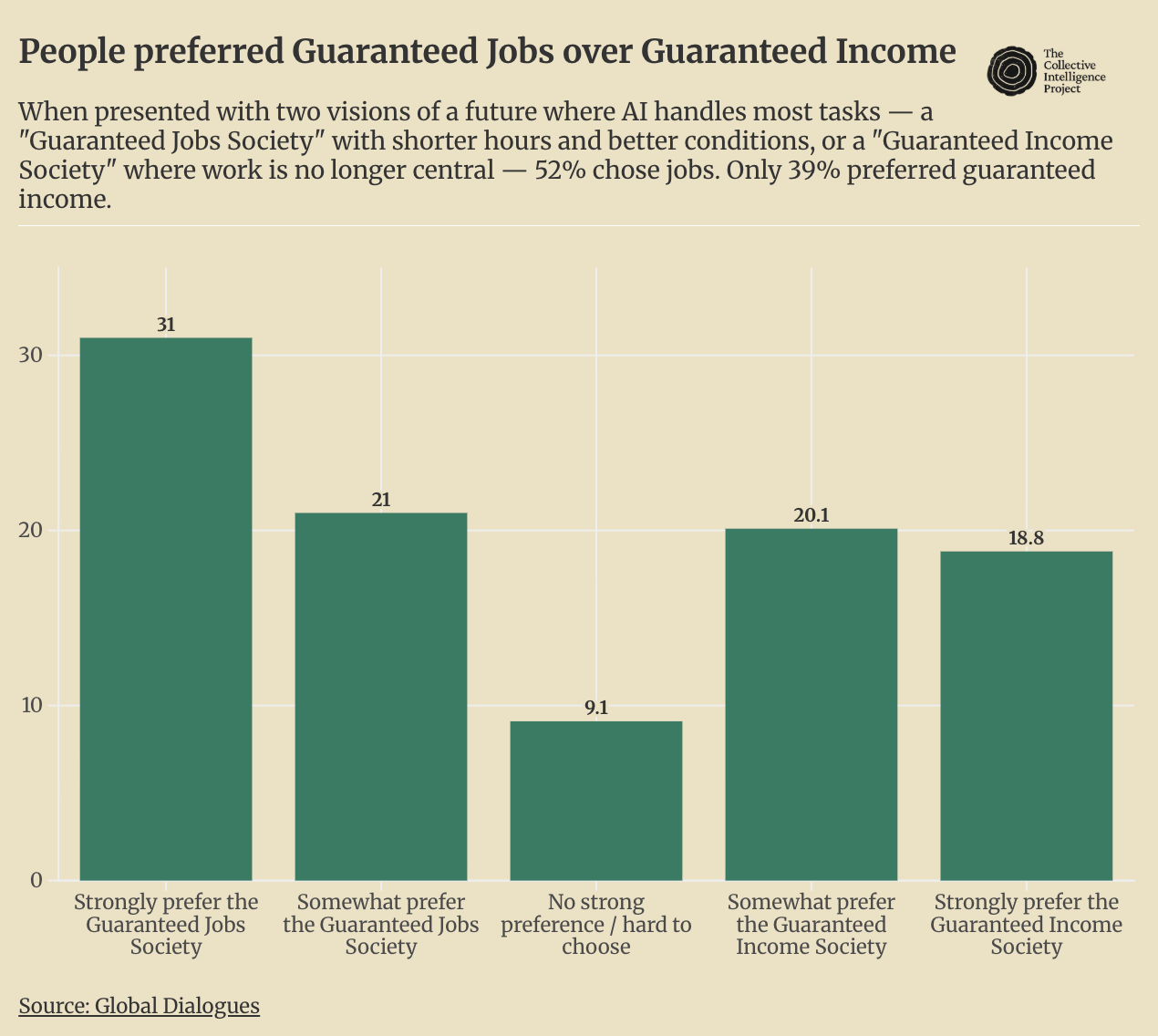

When presented with two visions of a future where AI handles most tasks — a “Guaranteed Jobs Society” with shorter hours and better conditions, or a “Guaranteed Income Society” where work is no longer central — 52% chose jobs. Only 39% preferred guaranteed income.

This was not a close call driven by one demographic. The preference held across regions and income levels. The financially precarious still preferred jobs, unless they were also dissatisfied with the job they had, in which case the split was roughly even. The one notable exception is North America, where the split is much closer: 47.2% prefer jobs, 40.8% prefer income. Across Africa, Asia, Europe, and South America, jobs lead clearly in every region with a substantive sample.

What surfaces when we ask people to explain their preferences tells us something important about what work means to people around the world. Those who prefer the Guaranteed Jobs Society return to three things: identity, purpose, and social connection. “Because working is part of my purpose in life.” (60% agreement.) “Because meaningful work gives people purpose, stability, and social connection.” (59%.) “I don’t think a society without work/jobs is good. I think it creates too much risk for crime, drug problems etc.” (59%.) Work, for these respondents, isn’t primarily about money. It is the structure through which life has meaning.

When we look at what divides participants’ responses most sharply, it isn’t the preference for jobs vs. income but the reasoning people gave to get there. Rationales rooted in anti-idleness and fear of moral decay are sharply contested cross-culturally. The claim that guaranteed income would “breed laziness, corruption, and decadence,” for instance, drew agreement from just 10% of Buddhist participants but 75% of those in Indonesia. The reasoning for guaranteed income (freedom, dignity, security), on the other hand, is less polarizing across segments, even though fewer people endorse the conclusion. The contested terrain is why people prefer jobs, not whether they do.

Divergence in reasoning for preference in jobs or income

Please explain why you expressed a preference for guaranteed jobs

“A guaranteed income can lead to complete dullness, increased violence from boredom and idleness, and a loss of meaning in life for many people.”

Agreement: 10% among Buddhist participants, 74% in Western Asia.

“Because in case of Guaranteed Income Society, a lot of persons would prefer to just drink, do drugs, eat and sleep. All this will just cause collapse in society rather than growth.”

Agreement: 19% among Buddhist participants, 79% among respondents aged 56–65.

“I hope to be self-reliant and have my destiny in my own hands. When others give me living expenses, I honestly don’t know what I can do with them, and I believe that most of these people will probably become addicted to games or similar activities instead of pursuing other interests. That’s human nature, and I’m rather pessimistic.”

Agreement: 10% among Buddhist participants, 79% among respondents aged 56–65.

“Because as humans we need to be busy in something. Getting paid without doing anything would make us lazy and ruin our civilization.”

Agreement: 14% among Buddhist participants, 74% among respondents aged 56–65.

Those who prefer the Guaranteed Income Society don’t reject the need for meaning; they relocate it. “People can do meaningful things without having to work just to survive.” (62%.) “Everyone could do what they most want in their life, without having to worry about the financial aspect.” (62%.) The appeal is about freedom from the coercion that currently ties survival to employment. The undecided group named the tension most precisely. The choice is not really between jobs and income but between two things people genuinely need: purpose and belonging on one side, security and freedom on the other. “It is difficult because while guaranteed income provides ultimate personal freedom, work often gives people a sense of purpose and social connection. Balancing financial security with the human need for meaningful contribution is a complex challenge.” (72%.)

Perhaps the question isn’t whether people want jobs more than a guaranteed income. It’s whether whatever replaces jobs — automation, unemployment, or UBI — can also provide meaning, identity and purpose. That tension is sharpened by two questions we asked in sequence. We asked participants to define what makes a job “good” in their own terms, then asked whether their current job met that standard. Only 26.6% said yes. Yet 66.8% said their job makes a meaningful contribution to the world. The same people, about the same job: two-thirds find it meaningful; fewer than one in three find it good. People are not defending the jobs they have. They are defending what jobs, at their best, are supposed to provide.

The depth of this preference becomes clearer when you look at how people rank their priorities for the future. 35% placed meaningful work in their top three alongside healthcare (43%) and food and water (38%), ranking it as a survival need rather than a luxury. Two-thirds said their current job makes a meaningful contribution to the world. People place enormous value on meaningful work while reporting that they don’t have enough of it.

People want AI to fill gaps, not replace roles

If people don’t want AI taking their jobs, where do they want it? When asked what would make AI genuinely beneficial for their community, respondents converged on three themes: creating and protecting jobs, making healthcare accessible, and improving education quality, each cited by 21% of respondents.

This trio appeared in the top three for most of the regions surveyed, with the order varying but the priorities stable. It also tracks closely with what people said matters most for their own wellbeing: they want AI to deliver visible, widely shared improvements in the systems they depend on daily.

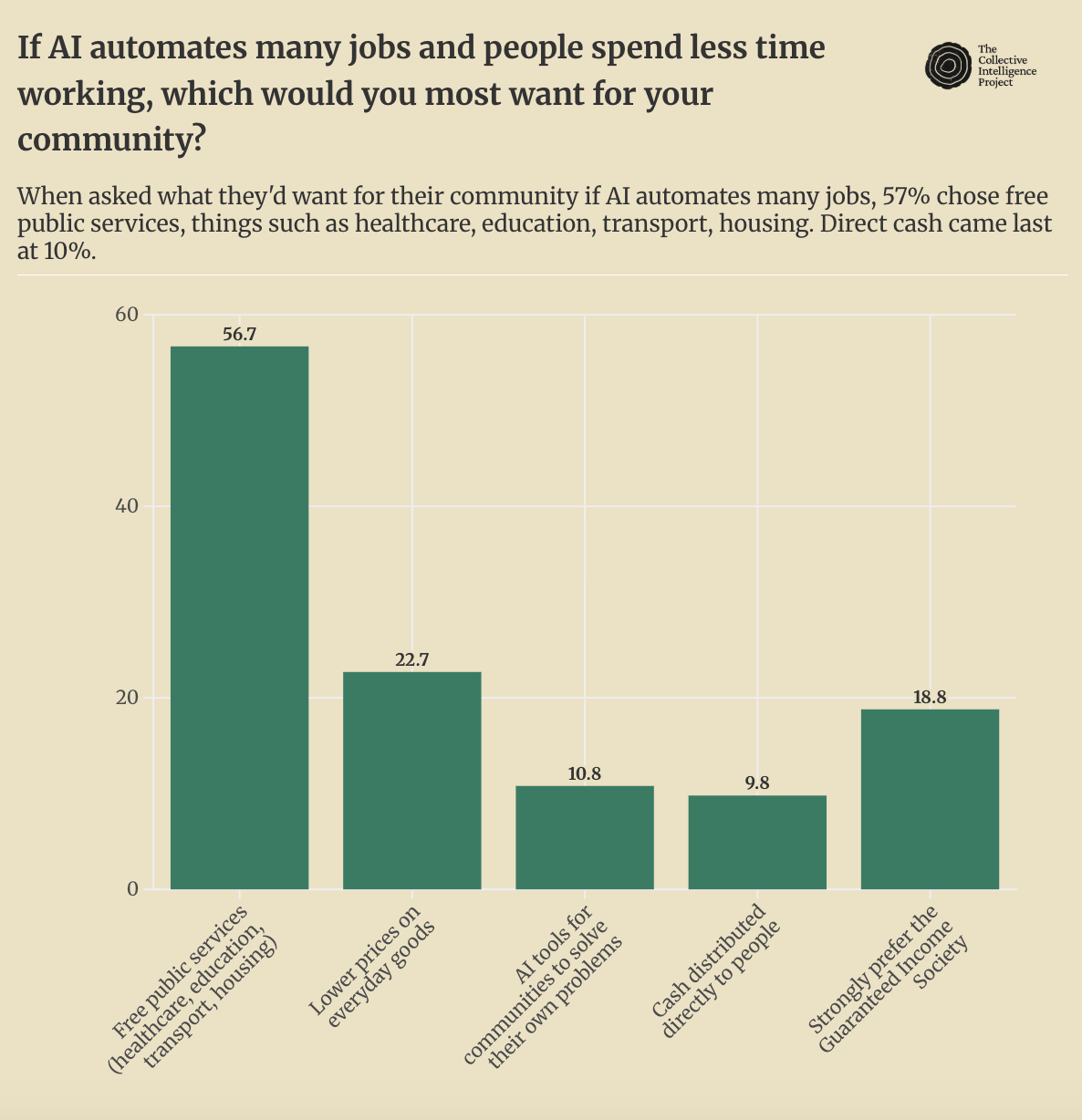

People want public services but don’t trust public institutions

When asked what they’d want for their community if AI automates many jobs, 57% chose free public services such as healthcare, education, transport, and housing. Direct cash came last at 10%. This preference held across every income group and every region, with remarkably little variation.

But when asked how a global AI fund should actually deliver resources, 67% chose cash sent straight to people. Only 9% supported government grants or better public services.

This finding requires some unpacking. We suspect it means that people want public services but do not trust public institutions to deliver them. A majority of respondents (56%) actively distrust their government to do what is right, while only 27% trust it to any degree. Corruption in fund distribution was the single most-cited concern about any AI wealth fund (48.7%), followed by government interference (32.2%).

People trust AI chatbots more than their elected representatives. They trust public research institutions (70%) and small businesses (52%) far more than governments or large corporations. AI companies sit in the middle, trusted more than big tech and government for now, but closer to the distrusted end of the spectrum.

This poses some challenges for policymakers hoping to shape how AI wealth is distributed. People are more likely to trust a chatbot than the government trying to regulate it. And more people agree than disagree that AI would make better decisions than elected governments about AI’s impact on their lives (38% vs. 28%). This is a structural problem that any serious AI economic policy needs to solve, as we cannot assume trusted institutions into existence.

The moral logic of redistribution: not compensation, but common inheritance

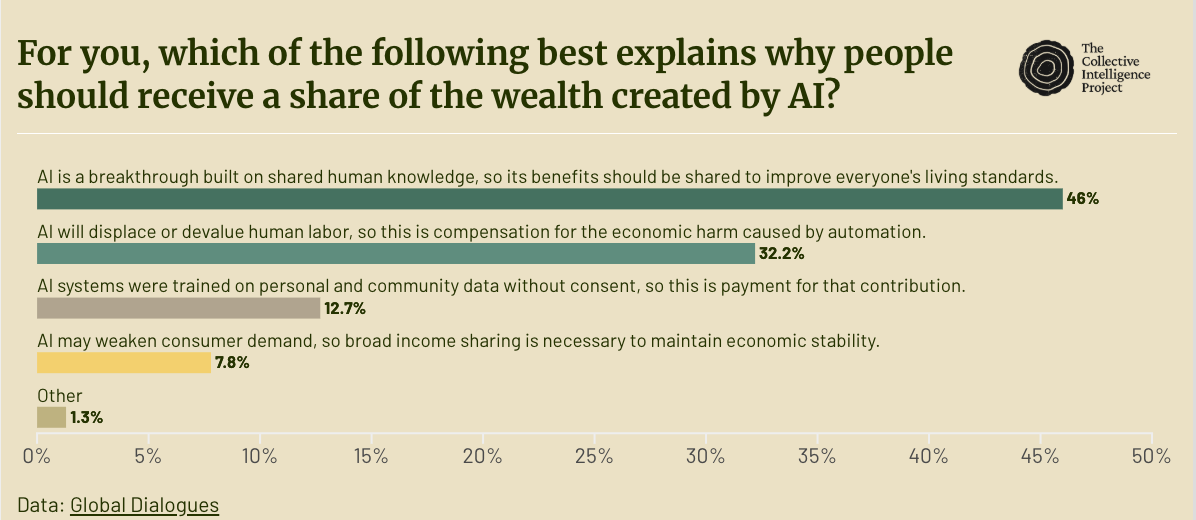

Of particular interest to us is why people believe AI wealth should be shared at all.

Nearly half of respondents (46%) said AI’s benefits should be shared because AI is built on shared human knowledge. 32% framed it as compensation for job displacement. Only 13% cited the “data labor” rationale that people should be paid because AI was trained on their personal data without consent.

The shared-knowledge framing was the plurality choice across every income group and most demographic slices. The pattern flipped in two regions: Europe, where job displacement narrowly led at 41% to 39%, and the Middle East and North Africa, where job displacement led decisively at 50%, likely reflecting the particular intensity of employment anxiety in a region where youth unemployment has historically ranked among the highest in the world.

A compensation framing produces safety nets: temporary, conditional, indexed to harm. A framing around shared knowledge is an argument grounded in the nature of the technology itself. The data labor narrative, despite its traction in academic and tech-policy circles, turns out to have limited resonance with a global public. People don’t primarily see themselves as unpaid data workers owed back wages. They see AI as a collective achievement and draw the logical conclusion: collective achievements should yield collective returns.

How to govern what doesn’t exist yet

If the what of AI redistribution is contested, the question of how people want it to work is fairly complex.

Across two separate questions asking how people would want a hypothetical global wealth fund to be distributed, respondents consistently preferred a phased governance model. When a fund is just starting, 52% preferred a small expert team. When it reaches global scale, 69% preferred a large representative council, a 30-point swing that emerged independently from over 1,000 people across 60+ countries. Respondents want the council’s members chosen primarily for expertise (48%) rather than through elections (34%) or government appointment (11%).

People envision something closer to a global board of trustees: large, diverse, and qualified, but not elected in any conventional sense and certainly not appointed by the national governments they already distrust.

Across three further design questions, respondents consistently prioritized need over efficiency in allocation. When budgets are limited, 55% preferred giving more to fewer people in genuine need rather than spreading funds thin. When choosing which regions to serve first, 64% said to go where people are poorest, even if AI isn’t the cause of their poverty. When deciding where to launch, 65% said to start where need is greatest, even if distribution is slower.

In this view, an AI wealth fund should not be narrowly tied to AI-specific harms, but should function as a general tool for addressing poverty, funded by AI wealth but not limited to AI casualties.

One’s economic situation shapes AI attitudes

A person’s material circumstances consistently shape how they see AI’s future. Financially comfortable respondents are far more likely to believe AI benefits will reach them personally (59%) than those who are struggling (40%). Respondents in wealthier regions prefer less work over more money, while South Asian and Sub-Saharan African respondents tilt heavily toward higher earnings.

One regional finding cuts against the expected pattern. Sub-Saharan African respondents are by far the most optimistic that AI benefits will reach their region, with 80% saying it’s likely. They also show the highest expected AI impact scores across nearly every domain. One might expect those furthest from AI’s centres of development to be the most sceptical, but the optimism appears conditional rather than naive: respondents identified specific applications they believe will transfer (business tools, healthcare, education) while also naming specific barriers that won’t, like cultural relevance and local language support.

This may reflect lived experience with technologies that leapfrogged legacy infrastructure rather than diffusing gradually through existing systems. People in these regions may be less interested in receiving AI’s dividends than in having AI build and strengthen the systems they depend on. Sub-Saharan African respondents were the most enthusiastic of any region about AI tools for communities (19%), suggesting that in many regions people see more value in direct tool provision than in expanded government programs or cash transfers.

From dividends to a new social contract

A small majority of people want work because it provides meaning, identity and community, not just income. They want public services, but not through governments they don’t trust. They want AI’s gains shared, not as compensation for harm, but as a return on collective inheritance.

AI is projected to add trillions to the global economy in the coming years. We’re left wondering not whether those gains will materialize, but who they will reach, and whether the institutions we have are the ones that can deliver them.

These are not preferences that fit neatly into a tax code or a fund prospectus. They are the outlines of a social contract that hasn’t been written yet, but one that we will quickly need to build and deliver on.

This post draws on findings from Global Dialogues Round 8, conducted in collaboration with Windfall Trust. 1,041 participants across 64 countries. Full results, methodology, and open-source data.

This survey serves as a structured global dialogue, not a nationally representative opinion poll. The distinction matters. The survey is one of the first to combine deliberative, interactive methods with global scale, gathering structured public input across a wide range of countries and contexts in a format that lets participants not only contribute their own views but also engage with and vote on the ideas of others. It asked people to reason through a set of genuinely complex questions about AI, wealth, and governance. It did not aim for statistical precision at the country level, but rather to give an overview of regional patterns and opinions that can help inform future policy conversations.

Participation is limited to those with reliable internet access, recruited through Prolific, and skews young (67% under 35), urban, and digitally engaged. Representativeness is tracked via the Global Representativeness Index and published for each round. Full methodology and limitations are discussed here.